Tesla FSD has already been subject to two recalls. Yet Tesla investors insist that it is a safe system with no crashes, despite the one we reported on November 11, 2021. Perhaps other crashes simply went unreported, but that excuse no longer holds. We now have at least two more known cases of crashes involving FSD, and one of them even has a video to prove it.

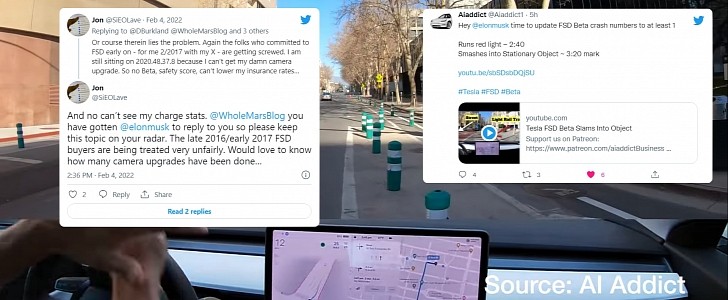

The second one we learned about came from Twitter user @SiEOLave. His Model 3 crashed on January 8. He took to Twitter on February 4 to complain that the car would only be returned to him on February 23. The Tesla customer was also unhappy that his Model X still did not get FSD, which he bought in February 2017, because Tesla could not update the cameras soon enough.

His crash was “a corner case.” He was in a residential area turning left, and there was black ice. The car lost control and hit a fire hydrant. The FSD owner “tried disengaging at the last second or two” but was not able to avoid the wreck.

The third incident was filmed. The YouTube channel AI Addict was testing Full Self Driving Beta in its latest version: 10.10, released with the OTA (over-the-air) update 2021.44.30.15.

The Model 3 was being driven in downtown San Jose, California, more precisely at the intersection of San Fernando Street and Almaden Boulevard. We don’t know exactly when the crash happened, but the video was published on February 5.

After turning right onto San Fernando Street, the Model 3 on FSD hit plastic bollards there. In the Google Maps view embedded below, we can see the one before the crosswalk is already bent. In the video, it had apparently been fixed, but not for long.

The video presenter claims to have pressed the brake pedal to the floor, but that did not stop the car in time to avoid hitting the bollard. That's something the Tesla customers involved in the first crash also reported. Tesla investors claim this is impossible.

AI Addict is probably getting a hard time due to the video. The tweet promoting the video was deleted while we worked on this article. Fortunately, we had a screenshot of it. What it said was this: “Hey @elonmusk time to update FSD Beta crash numbers to at least 1. Runs red light ~ 2:40. Smashes into Stationary Object ~ 3:20 mark.” It would not surprise us if the footage were also taken down in the next few hours.

Luckily, there were no pedestrians or other cars involved in these crashes. However, it is valid to question how long it may take for something more serious to happen. Above all, it is pressing to ask why Tesla does not perform the tests itself. Deploying “self-driving” software that is unable to prevent crashes is not something a company that claims to prize safety above all else should be doing.

Hey @elonmusk time to update FSD Beta crash numbers to at least 1

Runs red light ~ 2:40

Smashes into Stationary Object ~ 3:20 mark https://t.co/HIeXsQxlZd #Tesla #FSD #Beta

— Aiaddict (@Aiaddict1) February 5, 2022

Since @elonmusk first announced this in October 2021. @Tesla chat online and my local service center have no info for me. It’s BS. Oh and my 3 which was involved in a crash on 1/8 while being on Beta is expected to be back to me on 2/23. 46 days! Took 3 weeks…

— Jon (@SiEOLave) February 4, 2022