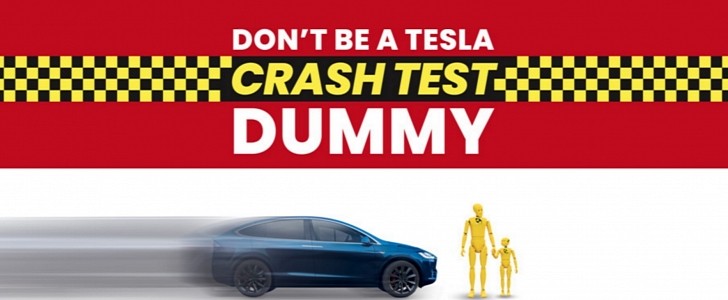

A full-page ad in the Sunday edition of The New York Times is not exactly cheap. In other words, anyone who pays for one means business, and the Dawn Project clearly does in taking aim at Tesla’s FSD (Full Self-Driving) software. It has presented its first campaign, called “Don’t Be a Tesla Crash Test Dummy.”

The campaign offers $10,000 to anyone who can show there is any other “commercial product from a Fortune 500 company that has a critical malfunction every 8 minutes.” That is the rate the Dawn Project’s analysis of FSD arrived at. It also demands that FSD be “removed from our roads until it has 1000 times fewer critical malfunctions.”

If you have never heard of the Dawn Project, there’s nothing to be ashamed of: the organization has apparently been established only recently. Its first campaign targets Tesla’s software and comes with a compelling explanation of why.

According to the Dawn Project’s presentation page, its founder is tired of the way software is designed. Dan O’Dowd presents himself as “the world’s leading expert in creating software that never fails and can’t be hacked.” He says he “created the secure operating systems for projects including Boeing’s 787s, Lockheed Martin’s F-35 Fighter Jets, the Boeing B1-B Intercontinental Nuclear Bomber, and NASA’s Orion Crew Exploration Vehicle.”

Explaining what the Dawn Project aims to tackle, the page says that “computers have become a grave threat to humanity since they have been hooked up to the internet together with every safety-critical device.” The concern is not the exchange of information itself but the fact that connected computers can interfere with “systems which people’s lives depend on.”

In that sense, the “move fast and break things” policy becomes a massive liability. It may have been acceptable when the most severe consequence of a failure was lost data. When such software is connected to “cars, the power grid, water plants, and chemical factories,” any bug is potentially catastrophic. This is why the initiative is called the “Dawn Project.” Its goal is “to bring Software from the Dark of Night to the Light of Day.”

The project has analyzed “many hours” of YouTube videos and concluded that FSD “commits a Critical Driving Error” about every eight minutes. Under the California DMV’s Driving Performance Evaluation, such errors include “disobeying traffic signs or signals,” “making contact with an object when it could have been avoided,” “disobeying safety personnel or safety vehicles,” and “making a dangerous maneuver that forces others to take evasive action.”

Tesla fans will criticize the fact that the analysis was performed on YouTube videos instead of with a vehicle. The Dawn Project defends the analysis by stating that the videos were made by Tesla fans, not by people who dislike the company. It may well be working on its own tests as we write this article in order to present evidence for its claims. Until it does, the full-page ad in the Sunday edition of The New York Times shows it is not fooling around: it is tired of “move fast and break things,” especially when the “things” may be human bodies.

Full page ad in Sunday’s NYTimes pic.twitter.com/2txGyd7g3m

— Andrew J. Hawkins (@andyjayhawk) January 16, 2022

When @elonmusk is wrong he always resorts to insults, remember the pedo guy? FSD is the worst trash software ever shipped by a respectable company. Green Hills Software is the operating system for B1-B nuclear bombers, F-35 fighter jets and Boeing 787s: https://t.co/QVSlI7mD5D

— Dan O'Dowd (@RealDanODowd) January 17, 2022