Hot on the heels of the news that researchers have attached a gun to a robotic dog, MIT researchers now say they’ve developed a control system for their ‘Cheetah’ robot that improves the speed and agility of legged robots and allows them to jump across random gaps in terrain in real time.

The team says that when a cheetah dashes across a field and bounds over irregular gaps in uneven terrain, it is pulling off a complex, monumental feat of computation. Those bounding movements may look effortless when a cheetah performs them, they say, but getting a robot to move in that fashion is a different prospect entirely.

They say that four-legged robots inspired by the movement of cheetahs, dogs, and other animals have made some startling advances, but their ability to move across natural landscapes still lags behind that of their living, mammalian inspiration.

Gabriel Margolis, a Ph.D. student working with Pulkit Agrawal, a professor in the Computer Science and Artificial Intelligence Laboratory (CSAIL) at MIT, says the existing methods researchers use to incorporate live ‘vision’ into models for legged locomotion just won’t do.

“In those settings, you need to use vision in order to avoid failure. For example, stepping in a gap is difficult to avoid if you can’t see it,” Margolis says. “Although there are some existing methods for incorporating vision into legged locomotion, most of them aren’t really suitable for use with emerging agile robotic systems.”

Margolis says he and his team members at CSAIL have developed a system that improves the ability of their cheetah robot to jump across irregular gaps in the terrain. The control system consists of two parts: one that processes real-time input from a video camera, and another that translates that information into instructions for how the robot should move its body.

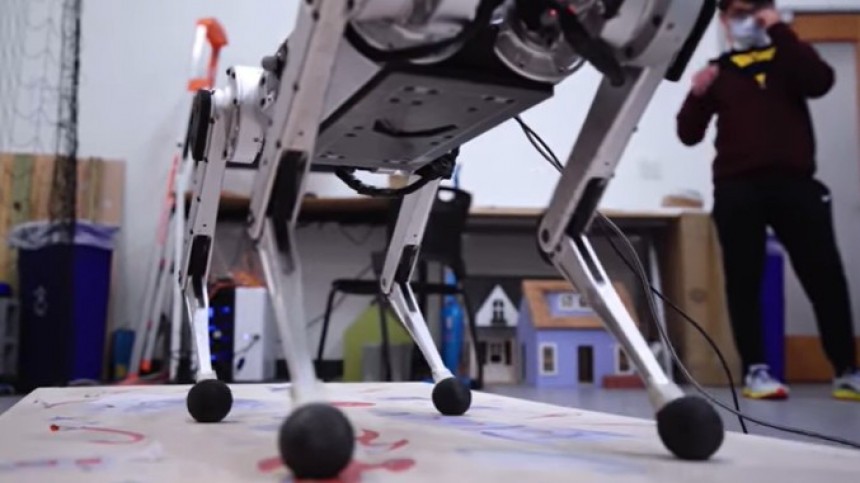

The researchers tested their latest system on the MIT “mini cheetah”, a powerful and agile small robot built in Sangbae Kim’s mechanical engineering laboratory.

The system differs from current methods for controlling four-legged robots in that it doesn’t require the terrain to be mapped ahead of time, and that lets the robots navigate autonomously.

The researchers say the development could, in the future, allow robots to charge off on their own to take on missions such as emergency response, or to climb a flight of stairs to make a delivery.

Or presumably, to carry a rifle into combat across a rugged field.

The controller they’ve developed converts the robot’s state into a set of actions for it to follow. Unlike blind controllers, which do not incorporate vision, it is meant to be robust and effective while also allowing robots to walk over discontinuous terrain.

It works like this: The robot’s camera captures depth images of the upcoming terrain, which are speedily fed to a ‘high-level controller’ and blended with information about the robot’s current state, such as its joint angles and body orientation. This high-level controller is essentially a neural network capable of “learning” from experience. Its output is passed to the second part of the system, a low-level controller, which turns it into instructions for how the robot should move its body.

“The hierarchy, including the use of this low-level controller, enables us to constrain the robot’s behavior so it is more well-behaved,” Margolis says. “With this low-level controller, we are using well-specified models that we can impose constraints on, which isn’t usually possible in a learning-based network. The mini cheetah is a great platform because it is modular and made mostly from parts that you can order online, so if we wanted a new battery or camera, it was just a simple matter of ordering it from a regular supplier.”
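
The two-stage hierarchy described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the researchers’ implementation: the class names, the 12-joint layout, the gains, and the torque limit are all hypothetical, the “network” is a single random linear layer standing in for a trained one, and a simple PD tracking law stands in for whatever model-based controller the team actually uses. The point it shows is the one Margolis makes: the constraint is enforced in the explicit low-level stage, outside the learned component.

```python
import math
import random
from dataclasses import dataclass
from typing import List

NUM_JOINTS = 12  # assumption: four legs with three actuated joints each


@dataclass
class RobotState:
    joint_angles: List[float]      # radians, one entry per joint
    joint_velocities: List[float]  # radians per second
    body_orientation: List[float]  # roll, pitch, yaw in radians


class HighLevelPolicy:
    """Stand-in for the learned network: one random linear layer plus tanh."""

    def __init__(self, depth_pixels: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        n_inputs = depth_pixels + NUM_JOINTS + 3
        self.weights = [
            [rng.uniform(-0.1, 0.1) for _ in range(n_inputs)]
            for _ in range(NUM_JOINTS)
        ]

    def target_angles(self, depth_image: List[float], state: RobotState) -> List[float]:
        # Blend the depth image with the robot's own state into one input vector.
        features = depth_image + state.joint_angles + state.body_orientation
        return [
            math.tanh(sum(w * x for w, x in zip(row, features)))
            for row in self.weights
        ]


class LowLevelController:
    """Explicit control law (PD tracking) with a hard torque constraint."""

    def __init__(self, kp: float = 20.0, kd: float = 0.5, max_torque: float = 10.0) -> None:
        self.kp, self.kd, self.max_torque = kp, kd, max_torque

    def torques(self, targets: List[float], state: RobotState) -> List[float]:
        commands = []
        for target, angle, velocity in zip(
            targets, state.joint_angles, state.joint_velocities
        ):
            raw = self.kp * (target - angle) - self.kd * velocity
            # The constraint is enforced here, outside the learned component.
            commands.append(max(-self.max_torque, min(self.max_torque, raw)))
        return commands


def control_step(policy, controller, depth_image, state):
    """One pass through the hierarchy: perception and state in, torques out."""
    return controller.torques(policy.target_angles(depth_image, state), state)


# Synthetic inputs for illustration only; no real sensor data is used.
state = RobotState([0.0] * NUM_JOINTS, [0.0] * NUM_JOINTS, [0.0, 0.05, 0.0])
depth_image = [1.0] * 16  # a flattened 4x4 depth patch, in metres
policy = HighLevelPolicy(depth_pixels=len(depth_image))
controller = LowLevelController()
joint_torques = control_step(policy, controller, depth_image, state)
```

Whatever the learned stage outputs, every command leaving `LowLevelController` stays within `max_torque`, which is the kind of guarantee that is hard to impose inside a neural network directly.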

Their system outperformed others that use only one controller, and the mini cheetah successfully crossed 90 percent of the test terrains.

“One novelty of our system is that it does adjust the robot’s gait. If a human were trying to leap across a really wide gap, they might start by running really fast to build up speed and then they might put both feet together to have a really powerful leap across the gap. In the same way, our robot can adjust the timings and duration of its foot contacts to better traverse the terrain,” Margolis says.
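
To make “timings and duration of its foot contacts” concrete, here is a small sketch of one common way to describe a gait: a stride period, a duty factor (the fraction of the stride each foot spends on the ground), and a per-leg phase offset. The article does not say how the MIT system represents gaits, so this parametrization, the `GaitSchedule` name, the leg labels, and the numbers are all assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class GaitSchedule:
    period: float                    # seconds per full stride cycle
    duty_factor: float               # fraction of the cycle a foot is on the ground
    phase_offsets: Dict[str, float]  # per-leg start of stance, as a cycle fraction

    def in_contact(self, leg: str, t: float) -> bool:
        """Return True if the given leg is scheduled to be on the ground at time t."""
        phase = (t / self.period - self.phase_offsets[leg]) % 1.0
        return phase < self.duty_factor

    def stance_duration(self) -> float:
        """Seconds each foot spends on the ground per stride."""
        return self.period * self.duty_factor


# Hypothetical trot: diagonal leg pairs alternate, long ground contacts.
trot = GaitSchedule(0.5, 0.5, {"FL": 0.0, "RR": 0.0, "FR": 0.5, "RL": 0.5})

# Hypothetical bound before a gap: front feet together, rear feet together,
# with shorter ground contacts.
bound = GaitSchedule(0.4, 0.3, {"FL": 0.0, "FR": 0.0, "RL": 0.5, "RR": 0.5})
```

Switching from `trot` to `bound` changes both when each foot lands (the phase offsets) and how long it stays down (the duty factor and period), which is the kind of adjustment Margolis compares to a person putting both feet together for a leap.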

He adds that, while their control scheme works in the lab, it’s still far from being deployed in the real world.
